Qwertyman for Monday, April 6, 2026

A REEL circulated recently online explaining the origins of the ubiquitous Internet symbol @ for “at,” tracing it back to medieval monks seeking a shortcut and to merchants using it to mean “at the rate of,” and then finally to a coding convention adopting it to link a computer user’s name to his or her domain or location.

I found it fascinating because I’m something of a geek, a failed scientist who had to switch from Engineering to English because I couldn’t hack the math, who ended up channeling his digital side (as opposed to the analog, which collects vintage fountain pens and antiquarian books) into a decades-long devotion to Apple computers and to nearly everything Apple produced. I even chaired the Philippine Macintosh Users Group (PhilMUG) back in the mid-1990s when the handful of us felt like early Christians in a pagan universe. We had monthly get-togethers in small restaurants to unbox the latest SCSI peripherals and discuss the newest features of System 8.0. I prided myself on the fact that I could strip and reassemble a PowerBook Duo practically blindfolded.

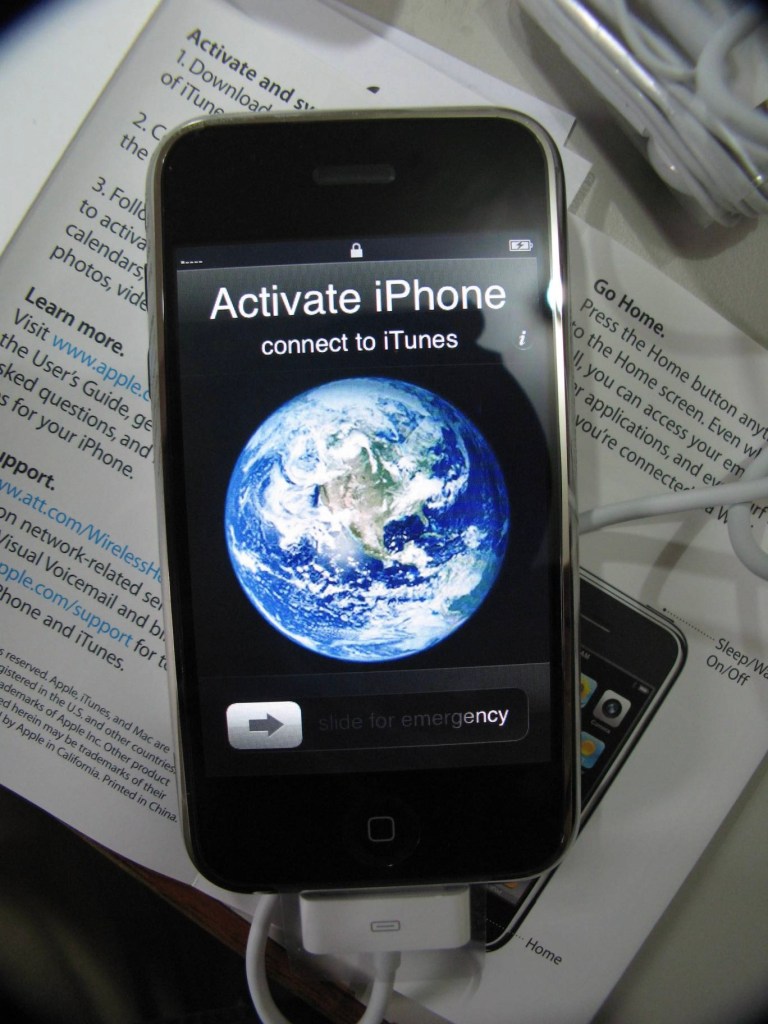

I mention this because Apple has just marked its 50th anniversary, having been founded in 1976 by Steve Jobs, Steve Wozniak, and Ronald Wayne with a machine cobbled together in a garage. After slogging through its first decades as a distant competitor to the more popular Windows PC, Apple finally achieved global domination after coming out with such game-changers as the colorful iMac, the iPod that made you smile the minute you put your earphones on, the ultraportable iPad, and of course the indispensable iPhone.

As industry observers noted early on, the genius of Steve Jobs and Apple wasn’t just in its products, but in creating the need for them; you didn’t know how irresistible the iPhone was until you held one. Apple and its passion for personal computing—not just the hardware but an entire lifestyle ecology that integrated communication and writing with music and photography—arguably changed the world, or at least hastened its evolution.

But enough of the proselytization. I’m not writing this piece to sell you another Mac; God knows Apple doesn’t need another endorser. Instead, that half-century of Apple that just went by made me pause to wonder where all that early joy of tech has gone, and indeed where technology has led us and will yet take us.

Like many early adopters, as we were called then, I recall the inimitable thrill of trying out a new machine, an operating system, or a program—something to make life and work easier and faster than before, another bold step into the future, a declaration of faith in the power of technology to transform life and indeed the world itself. New technology arrived with the presumption of goodness and optimism—that it would bring relief to global poverty and hunger, find a cure for cancer and other human ailments, improve education, and generate jobs for billions; it would draw more people into the circle of development, empower the oppressed, and induce social equity. With the advent of the Internet, more doors and barriers came crashing down. We could express ourselves publicly, bypass the traditional gatekeepers of information, challenge authority, build communities of common interest, expose falsehood and spread the truth, and create a truly transparent, interconnected, and progressive global society.

The kinds of tools that Apple and its competitors produced were supposed to assist that project. They did, and then again they did not. Instead of tearing down walls between people, the Internet raised new ones, behind the anonymity of which we could tear each other down. Computers and smartphones now facilitate disinformation, human trafficking, money laundering, and all manner of scamming.

Worst of all, technology has made it easier to wage war and kill people (as it always has). From Desert Storm back in the early 1990s to the present Iran War, military assaults and even mass slaughter have assumed the sanitizing cloak of an e-sport, a posture Trump and his war gamers have actively adopted, reducing casualties to memes. Indeed, the US-Israeli attack on Iran has now been called “the first AI war,” as an article by Michael Brown on Forbes.com substantiates:

“When I became the Director of the Defense Innovation Unit at the Pentagon in 2018, Project Maven was already underway. Long before LLMs, DIU was supporting Project Maven with several vendors to improve computer vision, an AI capability to distinguish among objects in satellite imagery to save analysts studying pixels…. That legacy led to Palantir’s Maven Smart System, today’s cornerstone of the U.S. military’s AI-powered operation. Maven fuses satellite imagery, drone video feeds, radar data, and signals intelligence into a single interface, allowing operators to classify targets, recommend weapons, and generate strike packages in near real time. The results have been staggering: more than 1,000 targets were struck in the first 24 hours of the campaign, a tempo that would have been unthinkable with purely human targeting processes. That tempo has been maintained with only 10% of the human analysts that would have previously been required to strike 1,000 targets daily.

“Yet the system’s limitations are equally revealing. Maven’s overall accuracy hovers around 60 percent, compared to 84 percent for human analysts. Palantir’s CTO nonetheless declared it ‘the first large-scale combat operation driven by AI,’ a characterization that raises questions about the ethics of AI-driven targeting and the adequacy of civilian protection safeguards.”

Of course it would be unfair to lay responsibility for this on the doorstep of Apple or other tech giants today—barring those who, unlike Anthropic, have actively lent their resources to Trump’s war machine. The companies known to have supported Israel’s military capabilities include Palantir, Microsoft, Google, IBM, and G42 (and yes, that’s according to AI). While the biblical prophets called for swords to be beaten into plowshares, somebody found a way to turn high tech’s plowshares into guns and missiles.

And then again, as the gun rights advocates always say, “Guns don’t kill—people do.” With some people being so stupid and devoid of conscience, why should we even wonder if and when AI will work better than the human brain? That already happened, more than fifty years ago.

Email me at jdalisay@mac.com and visit my blog at http://www.penmanila.ph.